Build Citations in AI Models: A Technical Framework for B2B

Build citations in AI models with a proven B2B technical framework covering entity clarity, content architecture, and off-site authority.

When a procurement manager asks ChatGPT "what's the best enterprise data integration platform," your brand either shows up or it doesn't. There's no page two. There's no "close enough." The model either cites you or recommends a competitor, and that recommendation carries serious purchasing weight.

This is the new reality for B2B marketing teams. Building citations in AI models isn't a theoretical exercise. It's a measurable, technical discipline that determines whether your brand participates in AI-driven discovery or gets quietly left behind.

At Index Lab, we've developed a structured approach to this challenge. Here's the framework we use with B2B clients.

Why AI Citations Work Differently from Traditional Backlinks

Traditional SEO citations (backlinks from authoritative domains) tell Google that your content is trustworthy. AI model citations work on a related but distinct logic. AI assistants like ChatGPT, Gemini, and Perplexity don't index and rank the web the way Google does. The language models behind them learn from large text corpora, and their answers draw on third-party mentions, structured data, and high-authority sources that describe a brand in specific, consistent language.

The implication for B2B teams is significant. Ranking on page one of Google doesn't automatically translate into being cited by an AI model. A brand with moderate domain authority but exceptional definitional clarity and cross-platform mentions can outperform larger competitors in AI-generated responses.

What AI Models Actually Look For

Three signals drive AI citation frequency more than anything else:

Consistent entity description: Your brand name, category, and core value proposition appear in the same language across your website, press mentions, directories, and third-party content.

Authoritative source mentions: Industry publications, analyst reports, and credible review platforms reference your brand in context (not just as a passing mention).

Structured, question-answering content: Pages that directly answer the questions buyers ask AI tools are far more likely to be incorporated into model responses.

This is why a technically sound B2B citation strategy for AI models has to span content, technical infrastructure, and off-site credibility simultaneously.

The Technical Framework: Three Layers That Drive AI Visibility

Layer 1: Entity Clarity and Structured Data

AI models build a picture of your brand from fragmented signals. Your job is to make that picture coherent and unambiguous. Start with schema markup. For B2B companies, Organization schema with a complete sameAs array (linking your brand entity to LinkedIn, Wikidata, Crunchbase, and relevant industry databases) gives AI systems a clean, verifiable identity to work with.
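As a rough illustration of the Organization markup described above, the following Python sketch assembles a JSON-LD object with a sameAs array and wraps it in a script tag. The company name, URLs, Wikidata ID, and the helper name `build_organization_jsonld` are all hypothetical placeholders; substitute your own verified profile URLs.

```python
import json


def build_organization_jsonld(name, url, description, same_as):
    """Return a schema.org Organization object serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links this entity to its profiles on other platforms
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Every value below is a made-up placeholder, not a real company or profile.
jsonld = build_organization_jsonld(
    name="Example Data Co",
    url="https://www.example.com",
    description="Enterprise data integration platform for mid-market manufacturers.",
    same_as=[
        "https://www.linkedin.com/company/example-data-co",
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.crunchbase.com/organization/example-data-co",
    ],
)

# Emit the tag that would sit in the page's <head>
print(f'<script type="application/ld+json">\n{jsonld}\n</script>')
```

Generating the markup from one source of truth, rather than hand-editing it per page, is one way to keep the description field identical everywhere it appears.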

Beyond schema, conduct an entity audit across your digital footprint. Ask: does every platform that mentions your brand describe what you do in consistent language? Inconsistency in how your category, use cases, and differentiators are described creates noise that suppresses citation frequency.
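One possible way to make the entity audit repeatable is to compare each platform's description against a canonical one and flag the outliers. The sketch below uses Python's standard-library `difflib.SequenceMatcher` for a crude character-level similarity score. The platform names, sample descriptions, and the 0.6 threshold are assumptions for illustration, not a calibrated standard.

```python
from difflib import SequenceMatcher


def audit_descriptions(canonical, descriptions, threshold=0.6):
    """Return {platform: similarity} for descriptions that drift from the canonical text.

    Similarity is SequenceMatcher's ratio (0.0 to 1.0) on lowercased text.
    The default threshold is an arbitrary starting point.
    """
    flagged = {}
    for platform, text in descriptions.items():
        ratio = SequenceMatcher(None, canonical.lower(), text.lower()).ratio()
        if ratio < threshold:
            flagged[platform] = round(ratio, 2)
    return flagged


# Hypothetical canonical description and per-platform copies
canonical = "Enterprise data integration platform for mid-market manufacturers."
descriptions = {
    "website": "Enterprise data integration platform for mid-market manufacturers.",
    "directory": "Cloud ETL tool",
}

flagged = audit_descriptions(canonical, descriptions)
for platform, score in flagged.items():
    print(f"{platform}: similarity {score}, review wording")
```

Character-level similarity will miss paraphrases that mean the same thing, so treat flagged entries as prompts for a human review rather than as errors.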

Layer 2: Content Architecture for AI Comprehension

Most B2B content is written for human readers browsing linearly. AI models consume content differently. They extract factual claims, categorical associations, and direct answers to specific questions.

Restructure key pages around question-answer pairs that mirror how buyers phrase queries on ChatGPT or Perplexity. For example, if you sell supply chain software, a page section titled "How does [Brand] reduce procurement cycle time?" with a specific, data-backed answer is far more useful to an AI model than a generic paragraph about "streamlining operations."

| Content Type | AI Citation Potential | Optimization Priority |
|---|---|---|
| FAQ pages with specific answers | High | Immediate |
| Category definition pages | High | Immediate |
| Case studies with metrics | Medium-High | Short-term |
| Thought leadership articles | Medium | Ongoing |
| Generic marketing copy | Low | Deprioritize |

Layer 3: Off-Site Authority and Third-Party Mentions

AI models weight third-party corroboration heavily. A brand that only describes itself is far less credible to a model than one described consistently by analysts, journalists, review platforms, and partners.

For B2B brands, the highest-leverage off-site citation sources include:

Industry analyst coverage (Gartner, Forrester, IDC, and regional equivalents)

G2, Capterra, and TrustRadius profiles with detailed, keyword-rich reviews

Partner and integration directory listings with accurate category descriptions

Trade publication bylines and product features

Podcast appearances and interview transcripts published on credible domains

The goal isn't volume. A handful of high-quality, contextually relevant mentions from authoritative sources outperforms dozens of low-quality directory submissions.

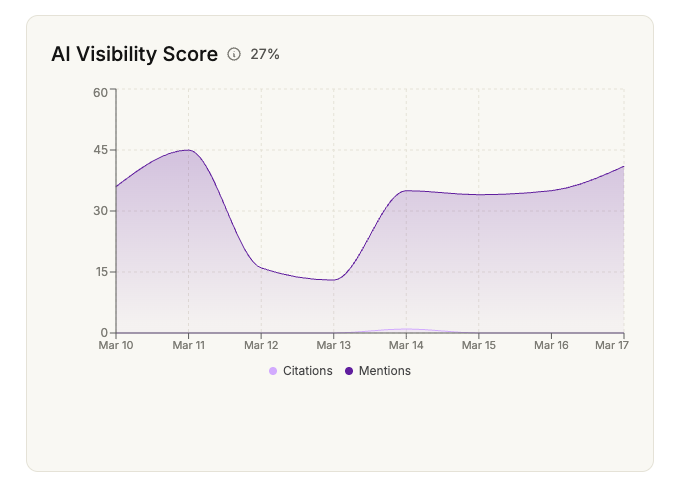

Measuring AI Citation Performance

One of the most common questions we hear from marketing directors is: "How do we know if this is working?" The short answer is that you have to monitor AI outputs directly, systematically, and across multiple platforms.

Building a Citation Monitoring Process

Set up a structured query testing protocol. Identify 20-30 queries that your buyers realistically ask AI tools, mapped to your product categories and use cases. Run these queries weekly across ChatGPT, Gemini, and Perplexity. Track whether your brand is mentioned, how it's described, and which competitors appear alongside you.

This isn't a one-time audit. AI citation patterns shift as models update and as the information landscape around your brand evolves. Treat this as an ongoing monitoring function, not a project with an end date.
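The testing protocol above could be logged with a structure along these lines. This is a minimal sketch that assumes you already have each AI response as plain text, whether copied by hand or collected some other way. It makes no calls to any platform, and the brand names, query, and the `QueryResult` record are hypothetical.

```python
import re
from dataclasses import dataclass, field
from datetime import date


@dataclass
class QueryResult:
    """One query run on one platform on one date."""
    run_date: date
    platform: str
    query: str
    response_text: str
    brands_mentioned: list = field(default_factory=list)


def find_mentions(response_text, brands):
    """Return the tracked brands that appear as whole words, case-insensitively."""
    found = []
    for brand in brands:
        pattern = r"\b" + re.escape(brand) + r"\b"
        if re.search(pattern, response_text, flags=re.IGNORECASE):
            found.append(brand)
    return found


def record_result(run_date, platform, query, response_text, brands):
    """Build a QueryResult with the mentioned brands filled in."""
    mentions = find_mentions(response_text, brands)
    return QueryResult(run_date, platform, query, response_text, mentions)


# Hypothetical brands and response text
tracked = ["Acme Integrate", "DataBridge", "FlowSync"]
result = record_result(
    date(2025, 1, 6),
    "ChatGPT",
    "what's the best enterprise data integration platform",
    "For mid-market teams, DataBridge and Acme Integrate are common picks.",
    tracked,
)
print(result.platform, result.brands_mentioned)
```

Keeping the full response text alongside the extracted mentions lets you review later how the brand was described, not only whether it appeared.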

The Metrics That Matter

Citation frequency: Percentage of relevant queries where your brand appears

Citation accuracy: Whether the model describes your brand correctly and favorably

Competitive share of voice: How your citation rate compares to named competitors

Traffic quality from AI sources: Conversion rates from visitors who arrive via AI referral links (particularly relevant on Perplexity)
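The first and third metrics above can be computed from a simple log of query runs. The sketch below assumes each run is a dictionary with a `brands` list. It treats citation frequency as the share of runs that mention your brand, and share of voice as each brand's portion of all tracked-brand mentions. That second definition is one reasonable reading of the metric, and the sample data is invented.

```python
from collections import Counter


def citation_frequency(results, brand):
    """Fraction of query runs (0.0 to 1.0) in which `brand` was mentioned."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if brand in r["brands"])
    return hits / len(results)


def share_of_voice(results, brands):
    """Each tracked brand's mentions as a fraction of all tracked-brand mentions."""
    counts = Counter(b for r in results for b in r["brands"] if b in brands)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical log: four query runs across platforms
results = [
    {"platform": "ChatGPT", "brands": ["Acme Integrate", "DataBridge"]},
    {"platform": "Gemini", "brands": ["DataBridge", "FlowSync"]},
    {"platform": "Perplexity", "brands": ["Acme Integrate"]},
    {"platform": "ChatGPT", "brands": []},
]
tracked = ["Acme Integrate", "DataBridge", "FlowSync"]

freq = citation_frequency(results, "Acme Integrate")
sov = share_of_voice(results, tracked)
print(f"Citation frequency: {freq:.0%}")
for brand, share in sov.items():
    print(f"{brand}: {share:.0%} share of voice")
```

Filtering `results` by platform before calling these functions gives the per-platform view, which matters because citation patterns can differ between ChatGPT, Gemini, and Perplexity.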

At Index Lab, clients who implement this full framework consistently see significant improvements across these metrics within three to six months. The brands that move fastest are those that treat AI model citation building as a dedicated workstream with clear ownership, not a side project sitting inside an existing SEO workflow.

If you want to understand where your brand currently stands across major AI platforms, our team at Index Lab runs detailed citation audits that show exactly which queries you're winning and where competitors are capturing recommendations you should own.

Conclusion

The technical framework for building citations in AI models comes down to three connected layers: entity clarity, content architecture, and off-site credibility. None of these layers works in isolation. A brand with perfect schema markup but inconsistent third-party mentions will underperform. A brand with strong analyst coverage but poorly structured website content will miss citations it should be earning.

B2B marketing teams that get this right early have a meaningful advantage. AI-generated recommendations influence real purchasing decisions across global markets, and the brands cited most consistently are those that invested in the groundwork before competitors recognized the shift.

This isn't the future of search. It's happening now. Learn more about how we approach AI search optimization at Index Lab and what a structured program looks like for B2B brands at different stages of maturity.

Frequently Asked Questions

How long does it take to start appearing in AI model citations?

Most B2B brands see measurable improvements in citation frequency within three to six months of implementing a structured program. The timeline depends on the starting point: brands with strong existing authority but poor entity clarity can move quickly, while brands starting from a lower baseline may need six to twelve months of consistent off-site authority building. Technical changes like schema implementation and content restructuring can influence citations relatively fast, while third-party mention building takes longer to compound.

Do we need to optimize separately for ChatGPT, Gemini, and Perplexity?

The foundational signals that drive citations (entity clarity, authoritative mentions, question-answering content) work across all major AI platforms. That said, each platform has nuances. Perplexity is more real-time and sources-forward, so strong indexed content matters more there. Gemini integrates deeply with Google's knowledge graph, making structured data and Google Business signals particularly relevant. ChatGPT leans heavily on training data quality and third-party corroboration. A well-executed program addresses all three, with platform-specific monitoring to track performance separately.

Can a B2B brand with low domain authority still build meaningful AI citations?

Yes. AI citation building isn't purely a domain authority game. Brands with moderate domain authority but strong entity clarity, specific use-case content, and credible third-party mentions in niche industry publications can outperform larger competitors with weaker definitional presence. The key is focusing on the quality of contextual mentions rather than trying to compete on raw link volume. For B2B companies in specialized verticals, a handful of citations from the right analyst reports or trade publications can have a disproportionate impact on AI model responses.
